Huw Dixon

The Generalized Taylor Economy (GTE)

A Generalized Taylor Economy (GTE) is one where there are many sectors: within each sector there are contracts of a particular duration, and these operate according to Taylor's (1980) staggered contracts model. So, if the longest duration of contracts is \(F\) periods, there will be up to \(F\) sectors \(i=1 \ldots F\). In sector \(i\), all contracts (price-spells) last for \(i\) periods, and each period a proportion \(\frac{1}{i}\) of prices will be reset. The share of each sector is \(\alpha_i \ge 0\), with \(\sum_{i=1}^{F}\alpha_i = 1\).
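To make the definition concrete, here is a minimal sketch in Python. The function name and the tolerance argument are my own illustrative choices, not taken from any of the papers. Since a proportion \(\frac{1}{i}\) of prices in sector \(i\) is reset each period, the economy-wide proportion of prices reset each period is \(\sum_i \alpha_i / i\).

```python
def reset_proportion(alpha, tol=1e-6):
    """Economy-wide share of prices reset each period in a GTE.

    alpha[k] is the share of sector i = k + 1, in which every contract
    lasts i periods and a fraction 1/i of prices is reset each period.
    (Hypothetical helper for illustration only.)
    """
    if any(a < 0 for a in alpha):
        raise ValueError("sector shares must be non-negative")
    if abs(sum(alpha) - 1.0) > tol:
        raise ValueError("sector shares must sum to one")
    return sum(a / i for i, a in enumerate(alpha, start=1))


# Simple Taylor 2 economy: alpha_1 = 0, alpha_2 = 1, so half of all
# prices are reset each period.
print(reset_proportion([0.0, 1.0]))

# A two-sector GTE with equal shares of one- and two-period contracts.
print(reset_proportion([0.5, 0.5]))
```

With data rounded to three decimals the shares may not sum exactly to one, in which case a looser `tol` is needed.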

A simple Taylor economy is one where only one sector has a strictly positive share of the prices: \(\alpha_F=1\). For example, in a Taylor 2 economy: \(\alpha_1=0,\alpha_2=1\). In a GTE, there are many sectors with strictly positive shares. We can think of these “sectors” as duration sectors. For example, a firm might set a price for one period and then for four periods. This firm would then move from the \(i=1\) sector to the \(i=4\) sector. The key point is the Taylor assumption that when firms set their price, they know how long it is going to last.

The great advantage of the GTE is that the sector shares can be chosen to reflect any possible distribution of durations: indeed, it can be exactly calibrated to match the empirical distribution. Furthermore, when it is plugged into a macroeconomic model it also gives nice dynamics, particularly for inflation: the response of inflation to monetary policy follows a hump shape. Hence the GTE provides a way of getting good dynamics that is consistent with the microdata on prices and/or wages. This contrasts with many existing models, which are either inconsistent with the microdata on prices or do not give nice inflation dynamics.

The appropriate distribution corresponding to the GTE is derived in general by Dixon (2012), and earlier versions. My own inspiration for starting research on the GTE was the following quote from John Taylor (which Engin Kara and I put at the start of our first paper on the GTE):

"There is a great deal of heterogeneity in wage and price setting. In fact, the data suggest that there is as much a difference between the average lengths of different types of price setting arrangements, or between the average lengths of different types of wage setting arrangements, as there is between wage setting and price setting. Grocery prices change much more frequently than magazine prices – frozen orange juice prices change every two weeks, while magazine prices change every three years! Wages in some industries change once per year on average, while others change per quarter and others once every two years. One might hope that a model with homogenous representative price or wage setting would be a good approximation to this more complex world, but most likely some degree of heterogeneity will be required to describe reality accurately."

John B Taylor, (1999) Staggered Wage and Price Setting in Macroeconomics in: J.B.Taylor and M.Woodford, eds, Handbook of Macroeconomics, Vol. 1, North-Holland, Amsterdam.

Date | Event | Working Paper/Mimeo (see note 1) | Publication |

1979 | The Simple Taylor economy is born. | John B Taylor (1979), 'Staggered wage setting in a macro model'. American Economic Review, Papers and Proceedings 69 (2), pp. 108–13 | |

1980 | Taylor, John B, 1980. "Aggregate Dynamics and Staggered Contracts," Journal of Political Economy, 88(1), pages 1-23, February. | ||

1994 | 8 sector GTE, including estimates of shares. | Taylor, J B (1994), Macroeconomic Policy in a World Economy, Norton. | |

2005 | First general formulation of GTE and application to output persistence with 20 sector GTE, calibrated using Bils-Klenow 2004. Derivation of DAF for Calvo distribution. | Huw Dixon and Engin Kara (2005): Persistence and nominal inertia in a generalized Taylor economy: how longer contracts dominate shorter contracts, ECB working paper 489. Huw Dixon & Engin Kara, 2005. "How to Compare Taylor and Calvo Contracts: a comment on Michael Kiley," CDMA Working Paper Series 0504. | Dixon H and Kara E (2011). Contract Length Heterogeneity and the Persistence of Monetary Shocks in a Dynamic Generalized Taylor Economy, European Economic Review, 55, 280-292. Dixon, H and Kara, E (2006): "How to Compare Taylor and Calvo Contracts: A Comment on Michael Kiley", Journal of Money, Credit and Banking, 38, 1119-1126. |

2006 | Steady-state identities and derivation of “new” cross-sectional DAF. Unified framework for time-dependent pricing. Estimates a 4 sector GTE from macrodata for US and Germany. Analysis of optimal monetary policy in 20 sector GTE using Bils-Klenow 2004. Application of 20 sector GTE to inflation persistence, calibrated using Bils-Klenow 2004. | Huw Dixon (2006): The distribution of contract durations across firms: a unified framework for understanding and comparing dynamic wage and price setting models, ECB working paper 676. Coenen G, Levin AT, Christoffel K (2006), Identifying the influences of nominal and real rigidities in aggregate price-setting behavior, mimeo. Engin Kara (2006) Optimal Monetary Policy in the Generalised Taylor Economy, ECB working paper 673. Huw Dixon and Engin Kara (2006), Understanding inflation persistence: a comparison of different models, ECB working paper 672. | Dixon H. (2012), A unified framework for using micro-data to compare dynamic time-dependent price-setting models, BE Journal of Macroeconomics (Contributions), volume 12. Coenen G, Levin AT, Christoffel K (2007), Identifying the influences of nominal and real rigidities in aggregate price-setting behavior, Journal of Monetary Economics, 54, 2439-2466. Kara, E (2010). Optimal Monetary Policy in the Generalised Taylor Economy, Journal of Economic Dynamics and Control, 34, p. 2023–2037. Dixon H, Kara E (2010): Can we explain inflation persistence in a way that is consistent with the micro-evidence on nominal rigidity, Journal of Money, Credit and Banking, 42, 151-170. |

2007 | Monte-Carlo study of estimation method for DAF with censoring - shows Hazard based estimate the best. | Gabriel P and Reiff A. (2007) Estimating the degree of price stickiness in Hungary: a hazard based approach, Mimeo, Central Bank of Hungary. | |

2008 | Bayesian estimation of 8 sector GTE from macro-data using micro priors. | Carlos Carvalho and Niels Arne Dam (2008), Estimating the Cross-sectional Distribution of Price Stickiness from Aggregate Data. Mimeo. | |

2009 | Optimal policy in GTE contrasted with models with Sticky information and indexed Calvo. | Engin Kara, 2009. "Micro data on nominal rigidity, inflation persistence and optimal monetary policy," Working Paper Research 175, National Bank of Belgium. | Engin Kara, 2011. "Micro-Data on Nominal Rigidity, Inflation Persistence and Optimal Monetary Policy," The B.E. Journal of Macroeconomics, vol. 11(1). |

2010 | Use of GTE and Generalized Calvo in Smets-Wouters (2003) model. Calibrated using French wage and price data. | Huw D. Dixon & Hervé Le Bihan, 2010. "Generalized Taylor and Generalized Calvo Price and Wage-Setting: Micro Evidence with Macro Implications," CESifo Working Paper Series 3119. | Huw Dixon & Hervé Le Bihan, 2012. "Generalised Taylor and Generalised Calvo Price and Wage Setting: Micro‐evidence with Macro Implications," Economic Journal, vol. 122(560), pp. 532-555. |

2011 | Estimation and comparison of GTE with other models using Bayes Factors, calibrated with micro-data. Uses GTE to explain reset and aggregate price inflation. | Huw Dixon & Engin Kara, 2011. "Taking Multi-Sector Dynamic General Equilibrium Models to the Data," Bristol Economics Discussion Papers 11/621. Engin Kara, 2011. "Understanding and Modelling Reset Price Inflation," Bristol Economics Discussion Papers 11/623. | Engin Kara, 2015. "The Reset Inflation Puzzle and Heterogeneity in Price Stickiness", Journal of Monetary Economics, November 2015. |

Note 1: These are the first verifiable versions available on the web. Of course, many of these working papers and mimeos will have been presented at conferences in earlier versions. These papers also exist in many different versions: look up the authors in RePEc for the full listing.

If anyone has more papers not included in this listing, please contact me at dixonh@cardiff.ac.uk

Estimated GTEs using Macrodata

This table brings together some estimates primarily based on fitting macroeconomic data.

| Duration (quarters) | Taylor 1994 (wages): US | Coenen et al (2007): US benchmark | Coenen et al (2007): Germany benchmark | Carvalho and Dam (2010 version), empirical: US | Carvalho and Dam (2010 version), empirical: Japan | Carvalho and Dam (2010 version), empirical: Denmark | Carvalho and Dam (2010 version), ex post (mean): US | Carvalho and Dam (2010 version), ex post (mean): Japan | Carvalho and Dam (2010 version), ex post (mean): Denmark |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 0.020 | 0.19 | 0.33 | 0.273 | 0.311 | 0.136 | 0.276 | 0.307 | 0.295 |
| 2 | 0.104 | 0.00 | 0.21 | 0.071 | 0.204 | 0.179 | 0.086 | 0.085 | 0.064 |
| 3 | 0.189 | 0.13 | 0.12 | 0.098 | 0.043 | 0.045 | 0.027 | 0.070 | 0.045 |
| 4 | 0.228 | 0.68 | 0.34 | 0.110 | 0.045 | 0.091 | 0.037 | 0.061 | 0.047 |
| 5 | 0.200 | | | 0.059 | 0.070 | 0.124 | 0.156 | 0.057 | 0.086 |
| 6 | 0.132 | | | 0.129 | 0.046 | 0.067 | 0.144 | 0.069 | 0.097 |
| 7 | 0.077 | | | 0.061 | 0.039 | 0.108 | 0.143 | 0.151 | 0.198 |
| 8 | 0.048 | | | 0.198 | 0.244 | 0.250 | 0.132 | 0.199 | 0.169 |

Taylor (1994): Estimated using Maximum Likelihood for US data 1961:4–1977:4. Source: Table 2-1, Page 47.

Coenen et al (2007), Table 1, page 2455. US data 1983-2003, German data 1975–1998.

Carvalho and Dam (2010). The first three columns are the “empirical distributions” from Table 2 (derived from micro data); the second three are the ex post means from Tables 3-5, obtained by Bayesian estimation using macro-data (1977-2007).

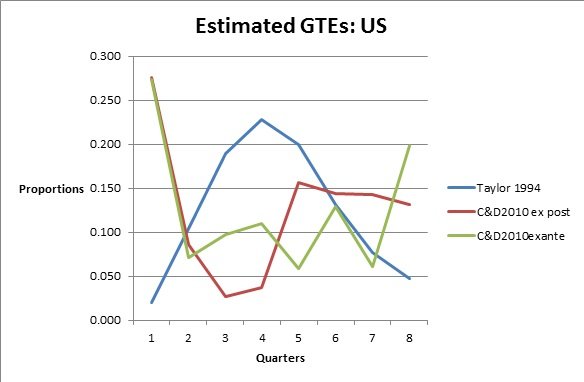

I have not included the Coenen et al estimated GTE in the figure, because the quarter 4 proportion is so large. Coenen et al and C&D agree that there is a large proportion (27%) of “flexible” prices that can change every quarter, whilst the proportion is just 2% for Taylor (wages). After that they differ substantially. The Taylor distribution peaks at 3-5 quarters (about 20% each), whilst the C&D distributions trough at 3-4 quarters. The C&D ex post distribution has a very fat tail for quarters 5-8, with small proportions (less than 10%) for quarters 2-4. The C&D ex ante (empirical) distribution has large ends in quarters 1 and 8, with quarters 2-7 bouncing around 10%. The Taylor distribution dies away after 5 quarters.

Coenen et al come up with some other estimates for the GTE under alternative assumptions about the macroeconomic model: these vary. We have just reported the benchmark here.

Estimates from the Microdata.

I have used a few methods.

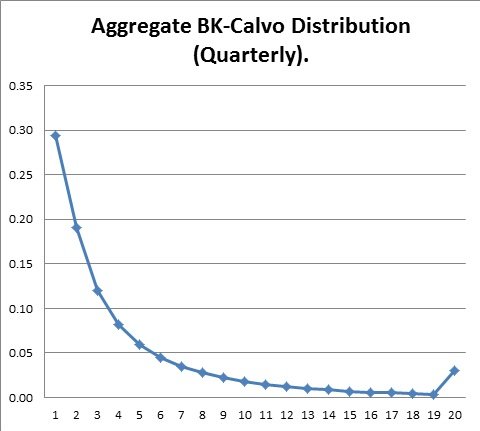

Method 1. Use frequency data and assume a Calvo distribution.

When Engin and I were first formulating the GTE in 2002, we had no data except for Taylor’s distribution, which we called “Taylor's US economy” and used in early versions of our GTE paper. However, there was the price-stickiness table on Pete Klenow’s web-site with the frequency data for 350 CPI categories. We constructed a possible macroeconomic distribution using the formula for the cross-sectional Calvo distribution, which we had derived in ECB working paper 489 and which was published in Dixon and Kara JMCB 2006.

This distribution has a long flat tail: durations of 9 quarters and over account for 14%. As we showed in Dixon and Kara (2011), this long tail can have a disproportionate effect on the way nominal prices respond in the whole economy.

This method is quite simple and can be done with any frequency data at any level of aggregation. As Dixon and Tian (2012) show, for the UK case at least, this is a good approximation: with highly disaggregated frequency data, the mean and median of the artificial Calvo distribution can match those of the actual distribution. The main differences between the true distribution and the artificial Calvo are (a) there are more flexible prices in the data than in the Calvo, and (b) there is no 12 month peak in the artificial Calvo. However, since DSGE models use quarterly data, the differences are to some extent “smoothed out” and as a result the differences between the true data and the artificial Calvo may not matter that much (Dixon and Tian (2012)).
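Method 1 can be sketched in a few lines of Python. The function names, the truncation rule and the example frequencies and weights are illustrative assumptions of mine, not the Klenow data. For a category whose prices are reset with constant probability \(\omega\) each period, the cross-sectional Calvo distribution of completed spell lengths is \(\alpha_i = \omega^2 i (1-\omega)^{i-1}\), the formula in Dixon and Kara (2006). Weighting each category's distribution by its expenditure weight gives the aggregate distribution. Here all durations at or beyond the truncation point are lumped into the last bin.

```python
def calvo_cross_section(omega, max_len):
    """Cross-sectional Calvo shares alpha_1..alpha_max_len.

    alpha_i = omega^2 * i * (1 - omega)^(i - 1) for i < max_len; the last
    bin absorbs the tail (all durations >= max_len), so shares sum to one.
    """
    if not 0.0 < omega <= 1.0:
        raise ValueError("omega must lie in (0, 1]")
    alpha = [omega**2 * i * (1.0 - omega) ** (i - 1) for i in range(1, max_len)]
    alpha.append(1.0 - sum(alpha))
    return alpha


def aggregate_calvo(freqs, weights, max_len):
    """Weighted average of the category-level Calvo distributions."""
    total = sum(weights)
    agg = [0.0] * max_len
    for omega, w in zip(freqs, weights):
        for k, a in enumerate(calvo_cross_section(omega, max_len)):
            agg[k] += (w / total) * a
    return agg


# Hypothetical per-period reset frequencies and weights for three categories.
freqs = [0.8, 0.4, 0.1]
weights = [0.3, 0.5, 0.2]
shares = aggregate_calvo(freqs, weights, max_len=9)
print([round(s, 3) for s in shares])
```

The frequencies must be expressed per model period, so monthly frequencies would first need converting if the GTE is quarterly; that step is not shown here.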

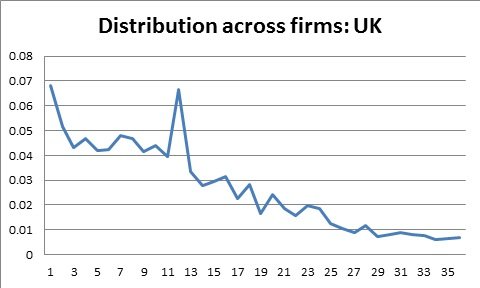

Method 2. Use the micro-price data (e.g. CPI, PPI).

Method 1 has a weakness: there are many possible distributions consistent with a given frequency. To get the actual distribution you need access to a microdata set such as the CPI. The best way to estimate it is to estimate the hazard function: as Gabriel and Reiff (2008) showed, this is a robust way to deal with censoring. I have used this in Dixon and Le Bihan (2012), Dixon and Tian (2012) and Dixon (2012). The hazard function \(h(i)\) gives the probability that a price spell ends after \(i\) periods, given that it has lasted that long. The converse concept is the survivor function \(S(i)\), which gives the probability that a price spell will last at least \(i\) periods.

If we assume that there is a steady-state, then there are a series of identities that link together the distributions of durations: in particular the hazard function is related to the cross-sectional distribution of completed spell lengths by the following identity:

\[\alpha_i = i \cdot \bar{h} \cdot h\left(i\right) \cdot S\left(i\right),\]

where \(\bar{h}\) is the proportion of firms changing price each period. Dixon (2012) shows that \(\bar{h} = \left(\sum^F_{i=1}S\left(i\right)\right)^{-1}\).
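The identity is easy to apply numerically. The sketch below (Python; the function name and the toy hazard are my own illustrative assumptions) builds the survivor function from the hazard using the standard recursion \(S(1)=1\), \(S(i+1)=S(i)\left(1-h(i)\right)\), which is consistent with the numbers in the appendix table, then computes \(\bar{h}\) and the sector shares \(\alpha_i\).

```python
def gte_from_hazard(h):
    """Map a hazard function to the GTE sector shares.

    h[k] is the hazard h(i) for i = k + 1. The last entry should be 1,
    so that every spell ends by the maximum duration F = len(h).
    Returns (S, h_bar, alpha).
    """
    S = []
    surv = 1.0
    for hi in h:
        S.append(surv)        # S(i): probability a spell lasts at least i periods
        surv *= 1.0 - hi      # S(i+1) = S(i) * (1 - h(i))
    h_bar = 1.0 / sum(S)      # proportion of firms resetting each period
    alpha = [i * h_bar * hi * Si
             for i, (hi, Si) in enumerate(zip(h, S), start=1)]
    return S, h_bar, alpha


# Toy example: half of all spells end after one period, the rest after two.
S, h_bar, alpha = gte_from_hazard([0.5, 1.0])
print(S, h_bar, alpha)
```

In the toy example two thirds of firms are in the two-period sector even though only half of all spells last two periods: longer spells are over-represented in the cross-section because they last longer.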

The above gives the distribution derived from the UK data using the Hazard function in Dixon (2012). The horizontal axis is months, the vertical the share for each month (we only show up to 36 months). As we can see, there is a 12 month “peak” and in general the distribution is declining. However, there is a fat tail of prices that change very infrequently.

Appendix: Taylor's US economy

Taylor effectively estimated the age distribution, from which he derived the DAF. For completeness, I report the full table with the corresponding hazard and survivor function along with the distribution of durations. This table (with different notation) first appeared in Dixon (2006), example 5, page 19.

| \(i\) | \(S\left(i\right)\) | \(h\left(i\right)\) | age | DAF | dist. |
|---|---|---|---|---|---|
| 1 | 1.0000 | 0.0738 | 0.272 | 0.02 | 0.0738 |
| 2 | 0.9262 | 0.2072 | 0.252 | 0.104 | 0.1919 |
| 3 | 0.7343 | 0.3166 | 0.199 | 0.189 | 0.2325 |
| 4 | 0.5018 | 0.4191 | 0.136 | 0.228 | 0.2103 |
| 5 | 0.2915 | 0.5063 | 0.079 | 0.200 | 0.1476 |
| 6 | 0.1439 | 0.5641 | 0.039 | 0.132 | 0.0812 |
| 7 | 0.0627 | 0.6471 | 0.017 | 0.077 | 0.0406 |
| 8 | 0.0221 | 1.0000 | 0.006 | 0.048 | 0.0221 |

\(\bar{h}\) = 0.2715

Table: The Hazard, Survivor and distributions for Taylor’s US economy.
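The table can be approximately reproduced from the DAF column alone by inverting the steady-state identity. The following Python sketch is mine (the function name is hypothetical) and assumes the last sector has a strictly positive share. It uses \(\bar{h} = \sum_i \alpha_i / i\), the distribution of durations across spells \(\alpha_i / (i \bar{h})\), and then recovers the survivor and hazard functions.

```python
def hazard_from_gte(alpha):
    """Recover h_bar, S(i) and h(i) from GTE sector shares alpha_i.

    alpha[k] is the share of sector i = k + 1. Assumes alpha[-1] > 0,
    so the survivor function stays positive up to the last duration.
    """
    h_bar = sum(a / i for i, a in enumerate(alpha, start=1))
    # Distribution of durations across spells (the "dist." column).
    f = [a / (i * h_bar) for i, a in enumerate(alpha, start=1)]
    S, h = [], []
    surv = 1.0
    for fi in f:
        S.append(surv)
        h.append(fi / surv)   # hazard: share of surviving spells ending now
        surv -= fi
    return h_bar, S, h


# DAF column of the table above (Taylor's US economy, rounded to 3 decimals).
taylor_daf = [0.020, 0.104, 0.189, 0.228, 0.200, 0.132, 0.077, 0.048]
h_bar, S, h = hazard_from_gte(taylor_daf)
print(round(h_bar, 3))
print([round(x, 3) for x in S])
print([round(x, 3) for x in h])
```

Because the DAF column is rounded and sums to 0.998 rather than one, the recovered values agree with the table only approximately.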

Copyright © 2009-2020 huwdixon.org All Rights Reserved

ORCID ID: 0000-0002-9875-8965; Scopus ID: 7101709262.